How Google’s BERT update is affecting search results

In 2015 Google launched RankBrain, a machine learning artificial intelligence system designed to further improve how its algorithm ranks search results. RankBrain actually went live in April 2015, but Google didn't announce it until October of that year.

In the months in between, no one noticed any difference in the results, or that anything was refining them in the background.

Fast forward four years and we’re seeing the same effect with Google’s latest and most significant update since RankBrain: BERT.

Google seems to have a habit of giving its updates silly names, but BERT is a highly significant and advanced addition to Google's ranking algorithm.

BERT stands for “Bidirectional Encoder Representations from Transformers” and is an AI-based natural language processing (NLP) system. It enables Google to better understand the relationships between all the words in a sentence and, therefore, what the searcher is ultimately trying to find.

Understanding the relationships between words in a sentence sounds like a simple task, but it's incredibly difficult for computer algorithms to do accurately. The technology behind BERT is so demanding that it pushed existing hardware to its limits. Google says that, for the first time, it is using its latest Cloud TPUs to serve search results.

How BERT improves search

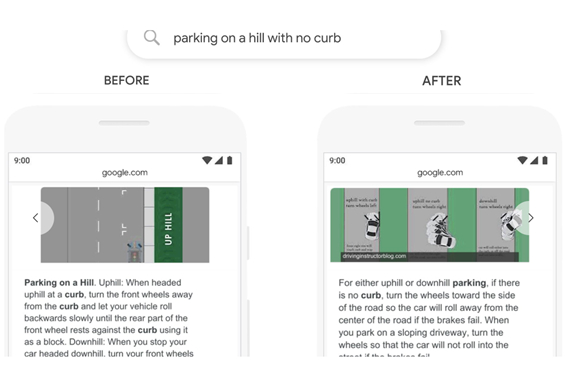

BERT is able to determine the intent behind a search query by weighing the relative significance of the words before and after each word in that query. Here is an example from Google of BERT in action, for the query “parking on hill with no curb”:

“In the past, a query like this would confuse our systems–we placed too much importance on the word ‘curb’ and ignored the word ‘no’, not understanding how critical that word was to appropriately responding to this query. So we’d return results for parking on a hill with a curb!”
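The toy Python sketch below illustrates what “the words before and after each word” means in practice. It is not BERT and not Google's code. It only compares the surrounding words available as context to a strictly left-to-right reader with those available to a bidirectional one. The helper names `left_context` and `bidirectional_context` are hypothetical. Splitting the query on spaces is also a simplification, because real BERT splits text into WordPiece sub-word tokens.

```python
# Toy illustration only: NOT BERT and NOT Google's code.
# It lists which neighbouring words are available as context for one word,
# comparing a strict left-to-right reader with a bidirectional one.


def left_context(tokens: list[str], i: int) -> list[str]:
    """Hypothetical helper: words a left-to-right reader has seen before position i."""
    return tokens[:i]


def bidirectional_context(tokens: list[str], i: int) -> list[str]:
    """Hypothetical helper: words on both sides of position i, the kind of context BERT is designed to use."""
    return tokens[:i] + tokens[i + 1:]


# Simplification: whitespace splitting instead of BERT's WordPiece tokenizer.
query = "parking on hill with no curb".split()
i = query.index("hill")

print("left-to-right:", left_context(query, i))
print("bidirectional:", bidirectional_context(query, i))
```

When interpreting “hill”, the left-to-right reader has not yet seen “no curb”, while the bidirectional view includes it. Real BERT goes much further than listing neighbours: it uses Transformer attention to learn how strongly each of these words should influence the meaning of the others.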

For more examples, see Google’s news post about BERT.

If you look at Google's before-and-after examples, you'll see that the results are completely different. With regular Google updates, results shuffle around a bit. With BERT, the results returned for the affected queries are entirely different.

This is why BERT is the most significant change to Google’s algorithm since the introduction of RankBrain.

BERT isn’t just refining the search results; it’s completely changing them to make them more relevant and accurate.

Key takeaways

BERT uses machine learning, which means it will continue to improve over time, becoming an increasingly intelligent language system. It also means the search results should become steadily more accurate and relevant.

However, note that BERT will mainly affect longer-tail search queries, where the relationships between the words are vital to returning the correct results. For short phrases, it’s likely to have very little impact.

Google estimates that BERT will affect around one in ten English-language searches in the US, which is currently the only language and market where it is being used for rankings. It will begin to roll out to other countries and languages over the coming weeks and months.

BERT is also being applied to featured snippets, since so many of them are generated for longer-tail search queries. As a result, featured snippets should become much more relevant than they are today.

On a final note, it’s important to understand that there isn’t really anything you can do to optimise for BERT, apart from having relevant content for a given search query. If your page previously ranked top for a specific search and no longer does with BERT, it’s probably because Google was showing the wrong result before.

We welcome BERT and the positive impact it will have on the quality of long-tail search results. The impact may not be obvious to most searchers or SEOs, but it’s Google’s biggest update in over four years, and a very exciting one at that.