SEO Insights – November 2020 Report

November is generally a critical month for businesses that generate sales online, and last month was arguably more vital than ever after a very turbulent year for many of them.

Initial feedback shows that online sales hit record levels in November, particularly around Black Friday when products are heavily discounted. At the same time, it appears that in-person store visits are at an all-time low due to various restrictions and lockdowns in place.

As we write this, the Arcadia Group has announced that it is going into administration, as is Debenhams. Sadly, this is leading to huge job losses, but these are examples of physical businesses that have not adapted well or quickly enough to the major shift to online shopping.

In contrast, Next and M&S have both focused heavily on their web presence and, even during these difficult times, they appear to still be performing well.

There has probably never been a more important time for businesses to ensure that they have an established and prominent online presence. It is extremely sad to see empty high streets, but the world has changed: online shopping is increasing by the day and the trend is likely to continue.

Now, in other (more search-related) news…

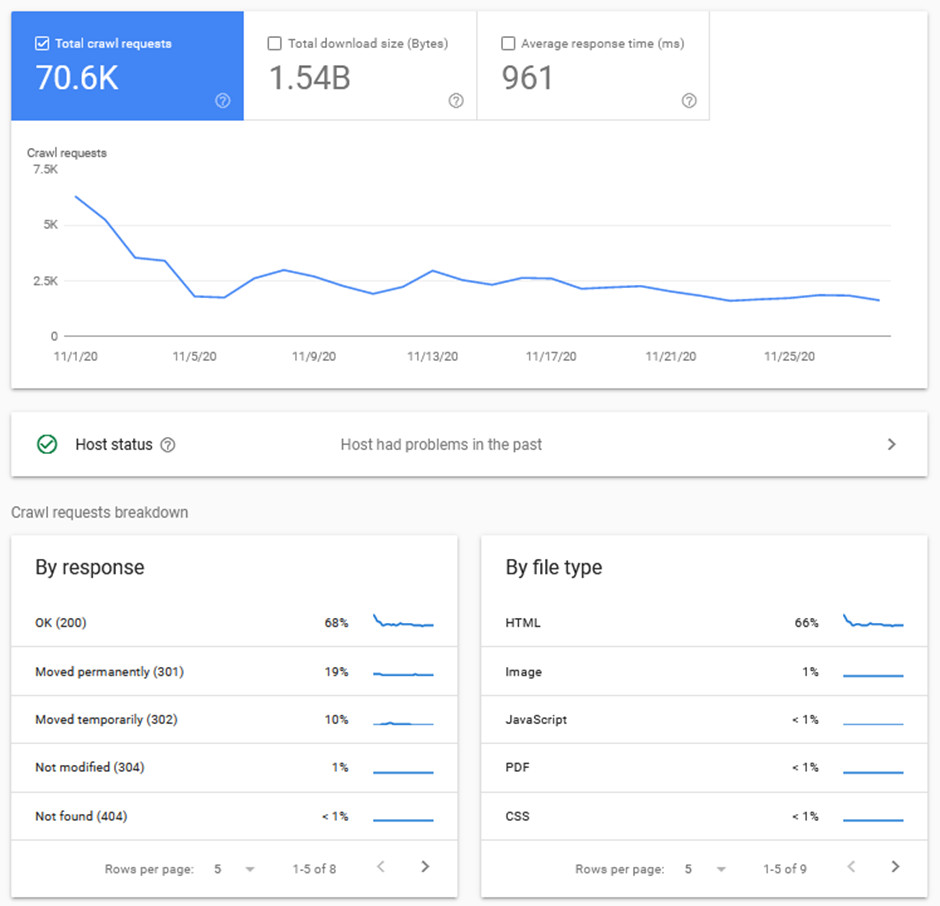

Crawl Stats Report

Google has released a new Crawl Stats Report in Search Console. This report gives an insight into how often, how quickly and how deeply Google is crawling a website.

Understanding crawl behaviour (Google’s discovery of pages) can help to diagnose website issues that may be leading to ranking losses, such as server downtime or Google’s inability to fully download resources.

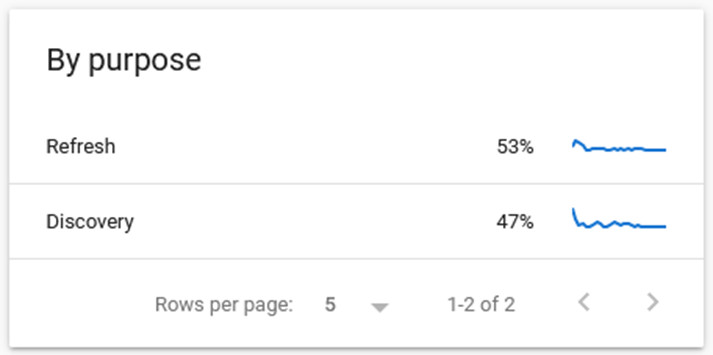

One new and quite interesting element of this report is that it shows stats for pages which are re-crawled (refreshed) separately from those which are newly discovered.

This is particularly useful for understanding how often pages are re-crawled and how long it takes for new pages to be discovered.

For example, an important page may only be re-crawled once per month because its content is quite static. If the content were updated on a regular basis, Google would be likely to re-crawl it more often, which is important for freshness in the search results and for having new content indexed quickly. We can monitor these crawl rates in this new report.

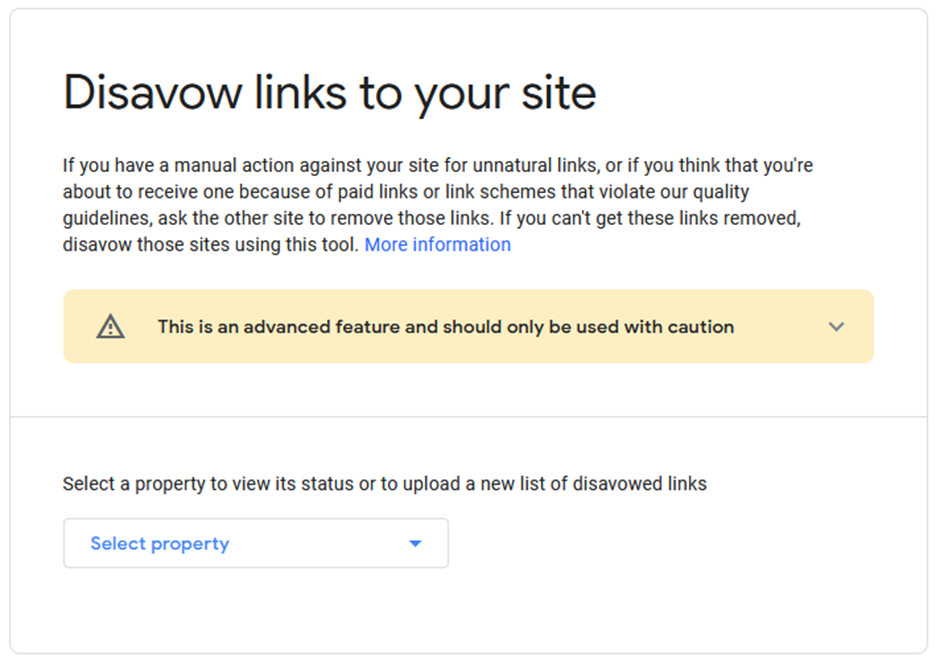

New and Improved Link Disavow Tool

Google has now migrated the link disavow tool to the new Search Console interface. This tool is used to tell Google to ignore certain links pointing to a website, such as spam links and paid links.

It now features a friendlier user interface, the ability to download the disavow file as a text file, and error reports that are no longer limited to 10 errors.
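For anyone curious about what that text file contains, here is a minimal sketch in Python. The disavow file is plain text with one entry per line: a full URL to ignore links from a single page, `domain:` followed by a domain name to ignore a whole domain, and lines starting with `#` as comments. The function name `build_disavow_file` and the example domains are hypothetical and used purely for illustration. This is not something we would generate for a live website without a careful link review first.

```python
def build_disavow_file(domains, urls, note=""):
    """Return the text of a disavow file: one entry per line."""
    lines = []
    if note:
        # Lines beginning with "#" are comments.
        lines.append(f"# {note}")
    # Whole domains are written with the "domain:" prefix.
    for domain in sorted(set(domains)):
        lines.append(f"domain:{domain}")
    # Individual pages are listed by their full URL.
    for url in sorted(set(urls)):
        lines.append(url)
    return "\n".join(lines) + "\n"


# Hypothetical entries, for illustration only.
example = build_disavow_file(
    domains=["spam-links.example"],
    urls=["http://paid-links.example/page.html"],
    note="Hypothetical entries for illustration only",
)
print(example)
```

The resulting text would be saved as a `.txt` file and uploaded through the tool in Search Console.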

As the warning says, this is an advanced tool and should be used with caution.

Google is now very good at ignoring spam links pointing to a website, so it is rarely necessary for a website to use this tool. In fact, you can inadvertently tell Google to ignore perfectly legitimate links to your website if you don’t realise that those links are actually helping your website to rank rather than harming it. Disavowing good links can lead to ranking losses.

However, sometimes there can be an overwhelming number of spam links that could be causing an issue for the website, in which case it is fine to use the tool. The same applies when a website has received a manual penalty because Google has detected that it has been buying links, a practice that goes against Google’s Webmaster Guidelines.

This is not a tool that we are ever likely to use for your website.

We use and monitor both of these tools, along with all of the others in Search Console, for all clients on a regular basis to ensure their websites are performing at their best at all times.